Your Doctor’s Favorite AI Has Never Been Tested

In January 2026, OpenEvidence — an AI-powered medical search engine that physicians use to answer clinical questions at the point of care — raised $250 million at a $12 billion valuation, making it the most valuable healthcare AI company in the world. More than 40% of U.S. physicians use the platform. It handled 18 million clinical consultations in December alone. Over 100 million Americans were treated last year by a doctor who used it.

Here's what's remarkable about those numbers: no one has adequately evaluated whether OpenEvidence is safe. A systematic review submitted to npj Digital Medicine, currently in preprint, put it bluntly: "The rapid adoption of OpenEvidence among clinicians outpaces the sparse research available."

A tool used daily by 430,000 doctors, across 10,000 hospitals, in a country that spent $4.9 trillion on healthcare last year — and the evidence base for it wouldn't survive a journal club.

A crowded market with an empty scoreboard

OpenEvidence isn't the only company offering AI-powered clinical decision support to doctors:

Doximity — whose platform reaches 85% of U.S. physicians, and whose HIPAA-compliant text messaging system I frequently rely on — offers DoxGPT, which had 300,000 unique clinician users in just the last three months of 2025.

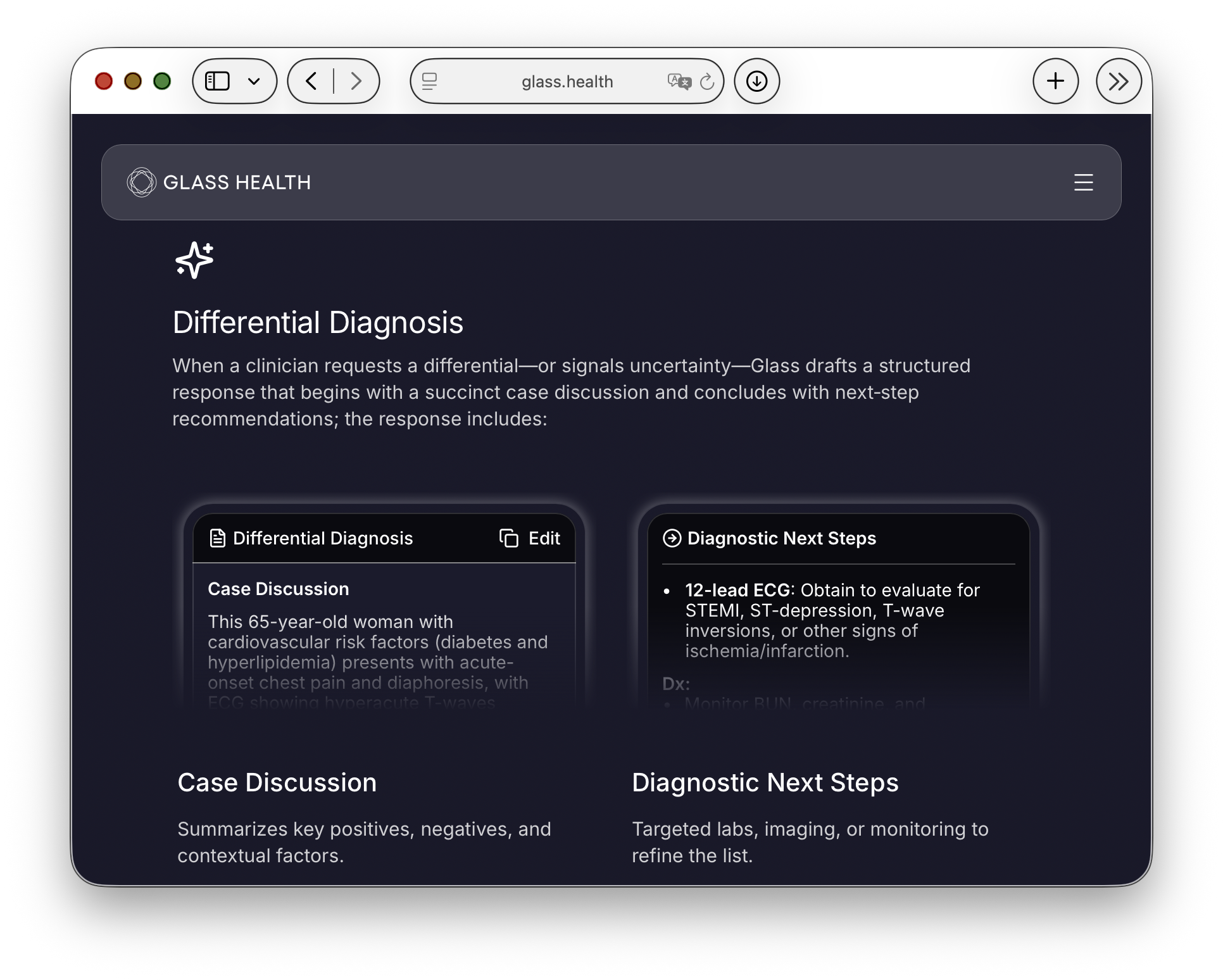

Glass Health, backed by Y Combinator, offers AI-generated differential diagnoses and clinical plans from patient summaries.

Vera Health offers AI-powered clinical answers with direct links to peer-reviewed sources.

UpToDate — long the gold standard for clinical decision support — launched its generative AI product, Expert AI, in September 2025.

Every one of these platforms claims to be evidence-based. Every one of them cites partnerships with prestigious journals and institutions. Every one of them says it's built for safety.

Not one of them appears to have ever been independently tested against the others. I was unable to find a single independent study that takes OpenEvidence, DoxGPT, Glass Health, and Vera Health, or even just two or three of them, gives them all the same panel of clinical cases, and tells doctors which one performs best.

What's worse: as we'll see, even the most widely used of these tools has only the thinnest evidence base for accuracy — and the rest appear to have none at all.

How good is each medical AI? We don't know.

For OpenEvidence, the most widely used of these tools, the answer is that we barely know. The npj Digital Medicine preprint found that existing studies were small, narrow, and confined to specific clinical domains. Accuracy varied in complex scenarios. The platform often reinforced rather than altered clinical decisions, which means we don't really know whether it's helping doctors get the right answer or just telling them what they already think.

For DoxGPT, Glass Health, Vera Health, and the rest? I was unable to find any comparable body of independent evaluation. Even UpToDate — the most trusted name in clinical decision support for three decades — has not published accuracy benchmarks for its new Expert AI product. When asked about accuracy by Newsweek, Wolters Kluwer's chief medical officer said it's been "pretty good." A spokesperson added: "We don't have shareable benchmarks yet." The product is already available to 250,000 clinicians.

A Sermo survey of physicians found that 44% cited accuracy and misinformation risk as their top concern about tools like OpenEvidence. Another 37% said peer-reviewed validation was the single factor that would most encourage adoption.

Doctors are asking for the evidence. Nobody is producing it. Yet more than 40% of doctors are still using OpenEvidence, including me sometimes, I'll admit, though probably less after researching this post.

Which medical AI is best? We don't know that, either.

The closest thing to a head-to-head comparison: Doximity published a side-by-side evaluation of its own DoxGPT against OpenEvidence, the traditional UpToDate online reference (not the newer Expert AI), and ChatGPT. More than 1,000 physicians evaluated the answers, and DoxGPT was selected as the "best clinical answer" at more than twice the rate of OpenEvidence. But that study asked physicians to pick which answer they preferred — with no independent gold standard, no expert panel adjudicating correctness, no measurement of whether either answer would have led to a safe outcome. Preference and accuracy are different things. It was also Doximity's own study, conducted on its own platform.

Doximity has since launched PeerCheck, co-chaired by Eric Topol and former Surgeon General Regina Benjamin, to bring physician peer review to DoxGPT outputs. That's a step in the right direction — but it's still one company's product, reviewed by that company's advisors.

The Hugging Face Open Medical-LLM Leaderboard at least attempted to maintain a running, independent comparison of models on standardized medical benchmarks. It has since gone dormant — a casualty of the broader Open LLM Leaderboard's retirement. The benchmarks, Hugging Face acknowledged, were becoming obsolete.

That's it. That's the evidence base for the tools doctors are using to make clinical decisions right now.

Why this is allowed

Imagine a pharmaceutical company told you its new cardiac medication was used by 40% of cardiologists and had never been tested in a large-scale, independent trial — just a handful of small retrospective studies, most of which found the drug "reinforced" existing treatment choices rather than improving them.

The FDA would never approve it. But that's exactly where we are with medical AI. And the regulatory framework makes it possible.

Under the 21st Century Cures Act, clinical decision support software is exempt from FDA regulation, provided it allows the physician to "independently review the basis" of its recommendations. In January 2026, the FDA further loosened this requirement, clarifying that even software providing a sole medical recommendation can be exempt, as long as the clinician can evaluate the underlying logic.

In practice, this means that the AI platform your doctor consulted before writing your prescription went through less regulatory scrutiny than the prescription itself.

What needs to happen

This isn't an argument against medical LLMs. These tools are very likely better than a physician relying on memory, a five-year-old UpToDate article, or a hurried Google search between patients.

But "very likely better" is not a standard we accept anywhere else in medicine. We need:

An independent, regularly updated benchmark that tests OpenEvidence, DoxGPT, Glass Health, Vera Health, and their competitors on the same clinical case panels, scored by the same physician panels, on safety and accuracy alike.

Head-to-head trials with clinical endpoints. Not just "did the AI give a correct answer?" but "did patients whose doctors used Platform A have different outcomes than patients whose doctors used Platform B?"

Transparent reporting of safety data. If Doximity can mobilize 10,000 physician experts for PeerCheck, an independent body can do the same — across platforms.
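To make the first of those asks concrete, here is a minimal sketch of how such a benchmark might tally its results. Everything in it is an assumption for illustration: the `Grade` record, the `score_platforms` function, and the two yes/no judgments (accurate, safe) are hypothetical, not any existing benchmark's method. The one design point it tries to capture is that platforms are only compared when the same reviewers graded them on the same cases.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Grade:
    """One physician's grade of one platform's answer to one case (hypothetical schema)."""
    platform: str   # anonymized label, e.g. "Platform A"
    case_id: str
    reviewer: str
    accurate: bool  # answer matches the agreed reference answer
    safe: bool      # answer would not plausibly lead to patient harm


def score_platforms(grades):
    """Return {platform: {"accuracy": float, "safety": float, "n": int}}.

    Raises ValueError unless every platform was graded on exactly the same
    (case, reviewer) pairs, so the comparison is like for like.
    """
    by_platform = defaultdict(list)
    for g in grades:
        by_platform[g.platform].append(g)

    # Collect the (case, reviewer) pairs each platform was graded on.
    coverage = {
        frozenset((g.case_id, g.reviewer) for g in gs)
        for gs in by_platform.values()
    }
    if len(coverage) > 1:
        raise ValueError("platforms were not graded on the same cases and reviewers")

    return {
        platform: {
            "accuracy": sum(g.accurate for g in gs) / len(gs),
            "safety": sum(g.safe for g in gs) / len(gs),
            "n": len(gs),
        }
        for platform, gs in by_platform.items()
    }
```

Reporting accuracy and safety as separate numbers is deliberate: an answer can be technically correct and still unsafe, and a single blended score would hide that.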

These tools are reshaping how medicine is practiced in America right now. The question isn't whether AI should be in the exam room — it already is. The question is whether we're going to evaluate it like a medical intervention, or keep treating it like Microsoft Word.

For a profession built on evidence-based medicine, the answer should be obvious.