Notes: 14 Million Appointments That Didn't Happen

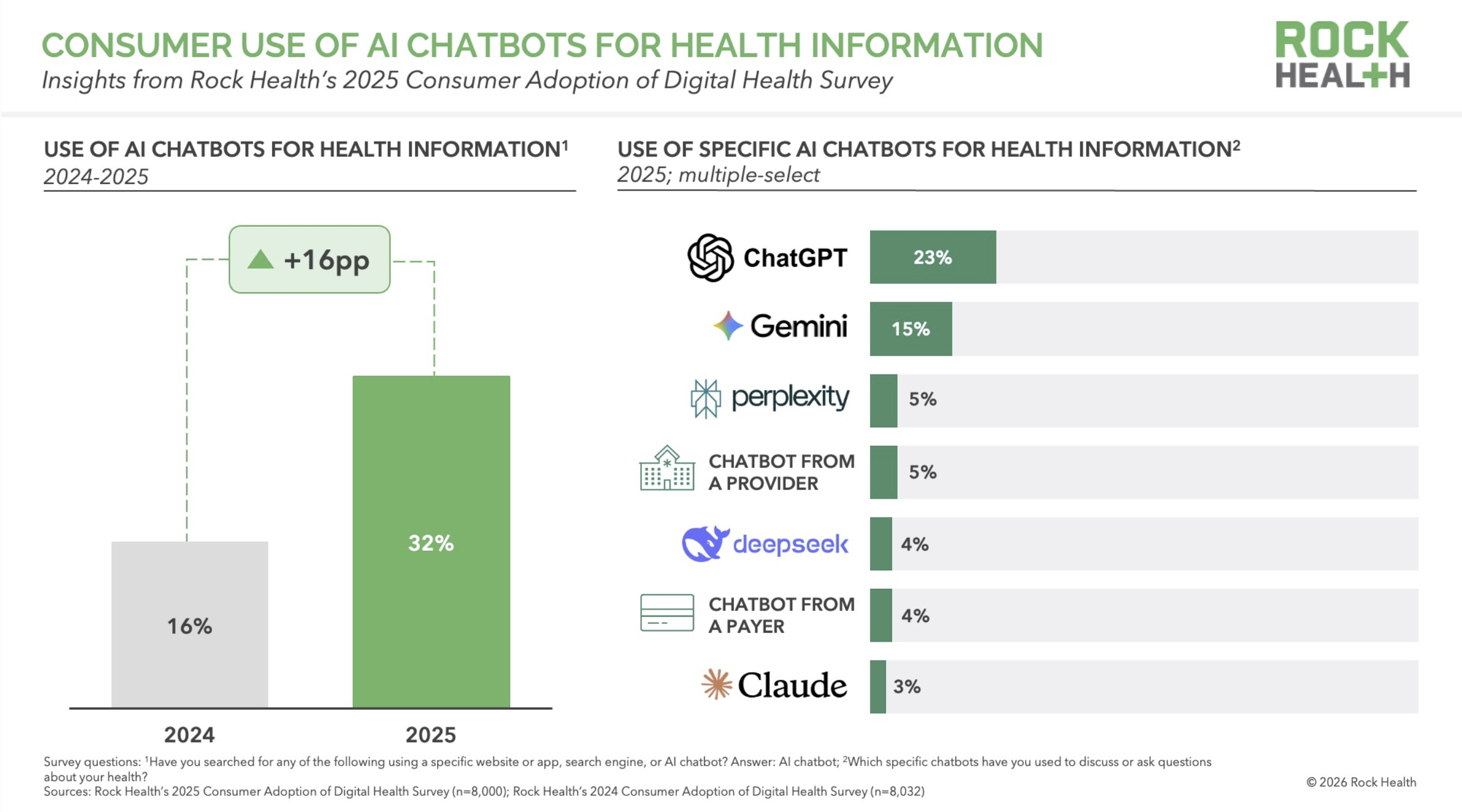

Last week I wrote about an 18% figure from Rock Health: the share of AI chatbot users who say they have adjusted their own prescription medications based on AI advice. The day before that, I wrote about Rock Health's finding that 32% of U.S. adults now use AI chatbots for health information. Also last week, I flagged a new Gallup/West Health projection: 14 million U.S. adults skipped a provider visit in the past 30 days after receiving AI-generated health advice.

Two separate surveys, two separate research teams, two separate samples. One phenomenon.

Patients are doing things outside the healthcare system that the healthcare system used to do for them. They are looking up symptoms, adjusting medications, interpreting results, and deciding whether to come in at all. And the system they are routing around has no way to see any of it.

The Gallup data is the most important of the three for anyone doing strategic planning. Rock Health told us patients are using AI. Gallup tells us patients are substituting it for visits, at scale, right now.

The numbers

Gallup poll

Gallup surveyed 5,500 U.S. adults between late October and late December 2025. 25% said they had used AI for health information or advice. Among recent users, 14% said the AI advice led them to skip a provider visit in the past 30 days. Gallup projected this to roughly 14 million U.S. adults.

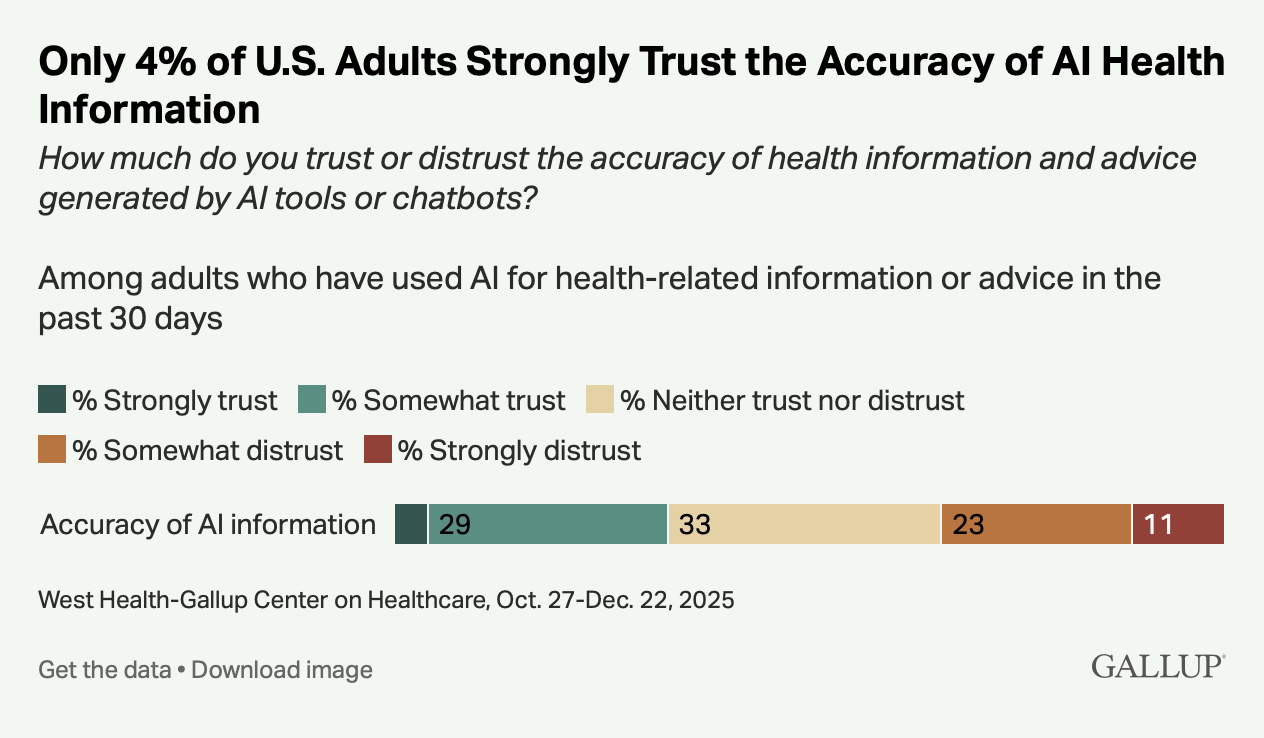

The other findings:

59% of recent AI users research before visiting a doctor

56% research after visiting a doctor

14% used AI because they couldn't pay for a visit

16% because they couldn't access a provider

21% because they felt dismissed by a provider in the past

Only 4% strongly trust the accuracy of AI health information

That last number is the most important.

The trust floor

Patients are not skipping visits because they believe AI is better than their physician. 96% of users do not strongly trust AI health information. They are using it anyway, and some are making care decisions based on it anyway, because they believe the alternative is worse (!).

This is the demand-side mirror of a pattern I've written about from the supply side. Ganguli, Lee, and Mehrotra documented a 6–25% decline in primary care visits from 2008 to 2016 across five independent data sources. In their 2019 JGIM paper, they proposed three mechanisms: reduced patient ability or desire to seek care, shifts in how primary care itself is delivered (teams, non-face-to-face modalities), and patients going elsewhere. That "elsewhere" included specialists, urgent care, retail clinics, and telemedicine — and, explicitly, self-care:

"Growing access to Internet-based health information, symptom checkers, and virtual patient communities may preclude seeking formal medical care altogether."

That was 2019. Pre-ChatGPT. Pre-GLP-1 consumer access. Pre-most of what we now call consumer health AI. Ganguli and colleagues had already identified online self-directed care as a driver of the visit decline — a formal entry in their mechanism table, under "Self-care."

What the Gallup data now captures is that same behavior, accelerated by tools vastly more capable than the 2013-era symptom checkers Ganguli mentioned. The 14 million skipped visits aren't a new phenomenon. They're the continuation of a trend Ganguli had already identified seven years ago, now running on dramatically better infrastructure.

What claims data misses

A visit that never happens generates no CPT code, no RVU, no line item. Nothing enters the revenue cycle. Nothing enters utilization reports. Nothing enters the demand forecasts that health systems, payers, and workforce planners use to project capacity three and five years out.

The AAMC's 86,000-physician shortage projection assumes current utilization patterns hold and extrapolates forward. If 14 million monthly visits are already gone — and the Rock Health and Gallup data suggest the number is growing — the projection's baseline is wrong. The shortage may be smaller than estimated, or may be in different specialties than estimated, or may reverse entirely in some categories. We don't know, because the data that would tell us doesn't exist in the places we look for it.

This is the $50B blind spot, updated. The 2008–2016 version was a decline in primary care visits that claims-based analyses could not fully measure. The 2025–2026 version is a decline in everything AI can plausibly substitute for: symptom interpretation, medication questions, follow-up clarification, diagnosis research. Gallup found that patients are using AI for all of these.

How big is 14 million, really?

The U.S. generates roughly 1.07 billion physician office visits per year, according to the most recent NAMCS data, or about 89 million per month. If each of the 14 million adults in Gallup's projection skipped one visit, that is about 16% of monthly visits, displaced by AI.

That's the context for the 14 million number. It isn't a niche phenomenon. It is roughly a sixth of current monthly physician office volume, gone, already, with no claims data to show for it.
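
For anyone who wants to check the arithmetic, here is a minimal sketch in Python. It uses only the two figures quoted above and assumes, as the comparison itself does, that each adult who skipped care skipped exactly one visit and that the visit would have been a physician office visit. The constant and function names are mine, for illustration only.

```python
# Figures quoted in the text; everything else here is illustrative.
ANNUAL_OFFICE_VISITS = 1.07e9  # NAMCS: physician office visits per year
SKIPPED_PER_MONTH = 14e6       # Gallup/West Health: adults who skipped a visit in the past 30 days


def monthly_visits(annual_visits: float) -> float:
    """Average visits per month, assuming volume is spread evenly across the year."""
    return annual_visits / 12


def displaced_share(skipped: float, monthly: float) -> float:
    """Skipped visits as a fraction of monthly volume.

    Assumption: one skipped visit per adult, all of them office visits.
    """
    return skipped / monthly


monthly = monthly_visits(ANNUAL_OFFICE_VISITS)
share = displaced_share(SKIPPED_PER_MONTH, monthly)

print(f"{monthly / 1e6:.0f} million visits per month")
print(f"{share:.1%} of monthly volume")
```

If some of those adults skipped more than one visit, or skipped something other than a physician office visit (urgent care, telehealth), the share moves accordingly, so treat the result as an order-of-magnitude check rather than a measurement.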

The clinician view is catching up

When I posted the Gallup data on X last Tuesday, the response that's stayed with me came from Dr. Martha Gulati, a preventive cardiologist at Cedars-Sinai: "This is remarkable but also true. This is why AI needs to be validated and the medical community needs to be a part of this to ensure we are meeting patients where they are. Which is on these apps, SoMe and the internet."

That's the right instinct, and it's worth taking seriously. But it also touches on the hardest operational problem in this whole picture. Validation, as the medical community has traditionally practiced it, is a process that takes years. The tools patients are using change weekly if not daily. ChatGPT in April 2026 is a meaningfully different product than it was in October 2025. By the time a rigorous validation study on a specific chatbot is designed, funded, run, peer-reviewed, and published, the product has shipped a dozen new versions.

The medical community's instinct is to slow things down until the evidence is in — but the patients aren't waiting. Whatever validation looks like in this environment, it will have to happen at consumer-software speed — or it won't happen at all.

What health system strategy should do about it

Two things.

First, stop treating projected visit volume as a reliable base for capital and capacity planning. It is the output of a model that misses a significant and growing share of what patients are doing.

Second, improve the model by measuring that share, i.e., what patients are doing outside the system. This is harder, but by no means impossible. Patient-reported utilization surveys, employer health plan data, and direct consumer research can fill some of the gap that claims data cannot. If your strategy relies on claims data alone, it is flying blind on the most important variable.